Text-to-audio (TTA) generation, synthesizing audio from natural language, has been widely studied for its ability to capture precise user intent. To effectively advance TTA models, it is essential to reliably evaluate generated audio without relying on costly human subjective ratings, motivating the development of automatic evaluation metrics that correlate well with human judgments. While recent CLAP-based metrics provide practical reference-free solutions, their coarse-grained text–audio similarity matching often correlates poorly with human ratings.

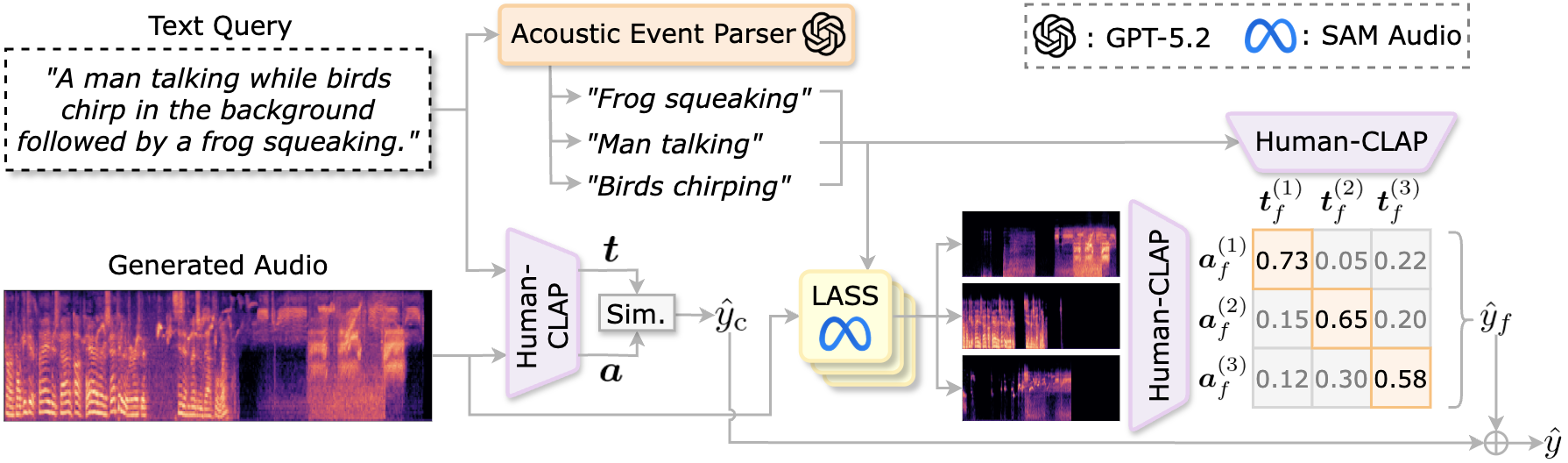

To address this, we propose ELSA, a reference-free evaluation metric for fine-grained text–audio alignment. ELSA decomposes the generated audio according to the distinct acoustic events derived from the text query and assesses alignment at the event level. Experiments across four TTA benchmarks show that ELSA achieves a higher correlation with human subjective ratings than prior metrics, highlighting its effectiveness for reliable TTA evaluation.

ELSA hierarchically evaluates global text–audio matching and fine-grained acoustic-event alignment by combining shared text–audio embeddings with event-level representations extracted via a text parser and a language-queried audio source separation model.
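The two-level scoring described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the averaging of event-level similarities, and the `global_weight` mixing parameter are all assumptions made for illustration, and the embeddings are assumed to have been computed already by a shared text–audio encoder (the text parser and the source separation model are not modeled here).

```python
import math
from typing import Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / norm


def hierarchical_score(
    text_emb: Vector,
    audio_emb: Vector,
    event_text_embs: Sequence[Vector],
    event_audio_embs: Sequence[Vector],
    global_weight: float = 0.5,  # hypothetical mixing weight, not from the source
) -> float:
    """Combine a global text-audio similarity with a mean event-level similarity.

    event_text_embs[i] is the embedding of the i-th parsed event description;
    event_audio_embs[i] is the embedding of the audio separated for that event.
    The weighted average used here is an assumed combination rule.
    """
    if len(event_text_embs) != len(event_audio_embs):
        raise ValueError("each event needs one text and one audio embedding")
    global_sim = cosine(text_emb, audio_emb)
    if not event_text_embs:
        # No events were parsed: fall back to the global similarity alone.
        return global_sim
    event_sim = sum(
        cosine(t, a) for t, a in zip(event_text_embs, event_audio_embs)
    ) / len(event_text_embs)
    return global_weight * global_sim + (1.0 - global_weight) * event_sim


# Toy example: the full clip matches the query, but only one of two events does.
score = hierarchical_score(
    text_emb=[1.0, 0.0],
    audio_emb=[1.0, 0.0],
    event_text_embs=[[1.0, 0.0], [0.0, 1.0]],
    event_audio_embs=[[1.0, 0.0], [1.0, 0.0]],
)
```

In this toy case the global similarity alone would be perfect, while the event-level term lowers the score because the second event is missing from the separated audio. This is the kind of mismatch a coarse-grained similarity cannot expose.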

Figure labels: GPT-5.2, SAMaudio

To appear.